Exploring the influence of AI and Synthetic Data at ESOMAR NA 2025

This year’s ESOMAR North America conference highlighted a central theme: exploring the landscape of research as it is increasingly shaped by AI and synthetic data. Whether leveraging AI for generating outputs or synthetic data for forecasting election results, there we see a strong a connection to how Paradigm approach’s data quality in quantitative sampling.

AI’s Influence on Human Data

AI has a tendency to favor the “mean,” smoothing over the nuance that makes human responses unique. Human8 spoke to this directly, warning that AI-generated outputs often feel “bland” when they attempt to appeal broadly.

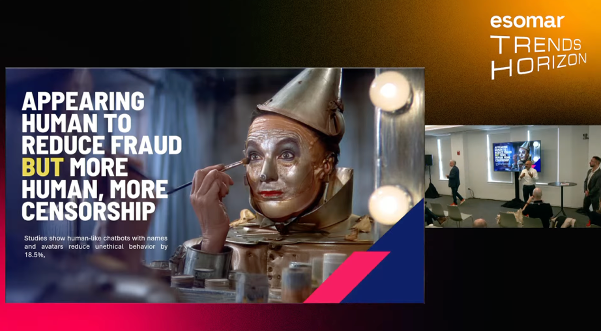

Ipsos brought this idea to life, exploring how even the perceived attractiveness or character of an AI bot can subtly steer respondents’ answers. For example, they gave a nod to the Wizard of Oz question, “What if you gave the Tinman a heart?” This reinforced the idea that human behavior is rarely neutral and that AI cannot replace thoughtful human oversight.

Synthetic Data Challenges

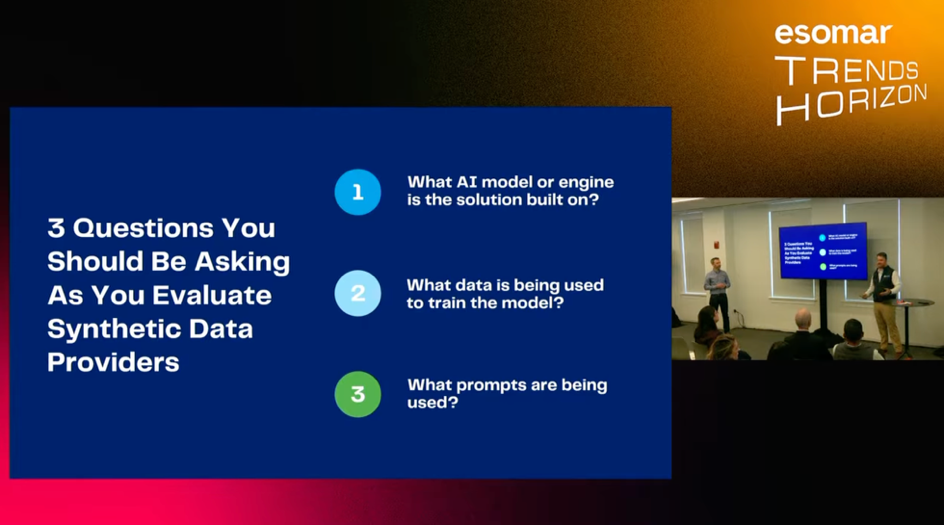

There is an uptick in demonstrating where Synthetic data can be valuable. Vendors like Quest Mindshare demonstrated how tools such as ChatGPT and Grok could be used to forecast elections, while EMI raised caution about the “truth” behind synthetic datasets versus in-the-moment sampling.

The discussions revealed both excitement and skepticism: synthetic data can reveal patterns and trends, but it cannot fully capture the richness and immediacy of real respondents’ perspectives.

Demographics and Bias in AI

A related theme emerged around bias in AI tools themselves. For example, Grok’s outputs skewed more right-leaning and reflected behaviors less likely to turn out in actual elections, while ChatGPT exhibited different demographic and behavioral tendencies. We continue to see the pitfalls of AI-driven data when it carries its own embedded assumptions and biases.

Paradigm’s Point of View

Synthetic data and AI are powerful accelerators, but only when guided by human insight. The industry’s shift toward automation and data generation has unlocked new efficiencies and ways to create better outcomes. We are excited to continue to evolve our expertise and capabilities as we continue to see strides in AI and Synthetic data innovation. However, it also raises critical questions about authenticity, bias, and respondent integrity.

Our approach is grounded in a human-in-the-loop model, where AI supports, but never replaces, the expertise and voice of people. This ensures that every insight is rooted in human truth, not just algorithmic patterning. We adhere to our GDQ (Global Data Quality) Pledge and evolve our Veraproof Suite, which provides layered validation, respondent verification, and bias detection across every stage of fielding.

As we build on the expertise and energy shared at ESOMAR NA 2025, one thing is certain: The future of research will belong to those who balance innovation with intention. Where technology enhances, not replaces, the pursuit of truth.